UNDERSTANDING CONTEXT

Gartner has declared 2026 as the year of context. Come read allabout context.

UNDERSTANDING CONTEXT

By W H Inmon

Jessica Talisman

“The answer is 7”

What does this statement tell you? It says that something has been counted and there were 7 of them. But it does not tell you what there are seven of –

Seas of the ocean

Days of the week

Dwarfs that attended Snow White

Dollars

And so forth.

Strictly speaking – taken by itself – the statement is almost meaningless. In fact, ANY number without context is practically meaningless.

In order to convey meaning, a number or any other piece of data has to have context attached to or otherwise associated with it.

STRUCTURED CONTEXT

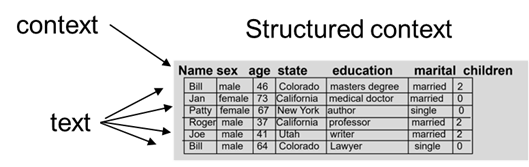

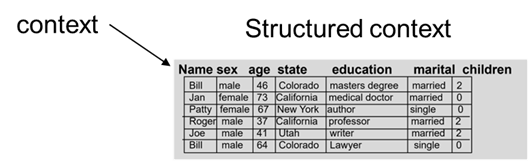

Structured data always has context attached to it. In structured data there are data bases, keys, indexes attributes, and so forth that allow context to be attached to data. And these structured facilities are determined before the first data flows into it. So finding the context of structured data is so simple as to be taken for granted. It is simply always there.

Consider the simple structured table shown below.

In this simple structured table, it is seen that there are columns that contain information about –

Name

Sex

Age

State

Education

Marital status

Number of children

In every column of data, context defines the type of data that resides in the column. It would not be normal for doghouse to be found in the education column or mayonnaise to be found in the column for sex or age. The column name is context that defines the content of the column.

The context of structured data is determined at the moment structured data is formed. And the format and context is rigidly enforced. As such, context of structured data is straightforward and relatively simple.

Context in the world of unstructured data – text – is very, very different from context in the structured world.

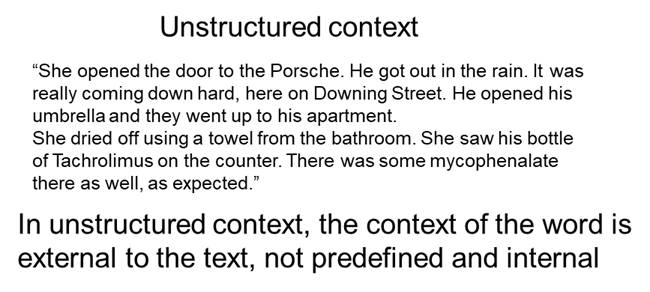

UNSTRUCTURED CONTEXT

Whereas context in the structured environment is open, obvious and well defined, context of text is anything but open and obvious. There is a need for context of text for both words and acronyms. In unstructured context, the context of the word or acronym is external to the text, not predefined and is internal to the text itself as is the case with structured data. In other words, the analyst needing to determine the context of text or acronyms must ferret out – infer - the context using several techniques.

There are many ways that context of text can be determined. One method for determining the context of text is through what can be called immediate adjacency.

IMMEDIATE ADJACENCY

Consider the phrase – Dallas Cowboys. When a person hears the term, they think of a once great professional football team in Texas. But if the word Dallas is found on one page in isolation, the person thinks of a city in north Texas and if the world cowboy is found three pages later the person thinks of a man on a horse with a hat, a rope, and a pistol on his hip. It is only when the words are immediately adjacent to each other that the term takes on the meaning of a professional football team.

Immediate adjacency then has lent a new meaning altogether to words that mean something else independently placed. In many ways immediate adjacency of words is the simplest form of context inference.

SENTENTIAL CONTEXT

Another form of context of text is context that exists in the same sentence but is not immediately adjacent. As a simple example of sentential context, consider the simple sentence shown below -

When a person reads the sentence, it is understood that the tiger being referred to is an animal, not Tiger Woods. Words occurring in the same sentence is a very powerful form of inferring context.

REFERENTIAL CONTEXT

But there is context of text that can be inferred that lies beyond words appearing in the same sentence. Consider a broader method by which context is inferred. Consider the following sentence.

In this sentence, the word fire is inferred to mean the pulling of the trigger of a gun. This is because gun is mentioned in the same group of words, but not in the sentence that the word fire appears in. In this case context is inferred. The physical juxtaposition of the words outside of a sentence can be used to infer context as well.

The challenge is selecting which words infer context. It is entirely possible that there may be conflicting types of context that may have been inferred by looking over a large amount of words.

The further context moves away from the word being examined, the greater the chance that context will not be inferred properly.

GENERAL CONTEXT

But there is an even broader means of inferring context. An entire document may have context. For example, consider the IRS tax code. All text found in the IRS tax code will have some relationship – either direct or indirect – with federal taxation.

And as with other forms of context, the broader the contextual reference from the word being examined, the greater the chance that the word will be interpreted incorrectly.

CONTEXT RESOLUTION

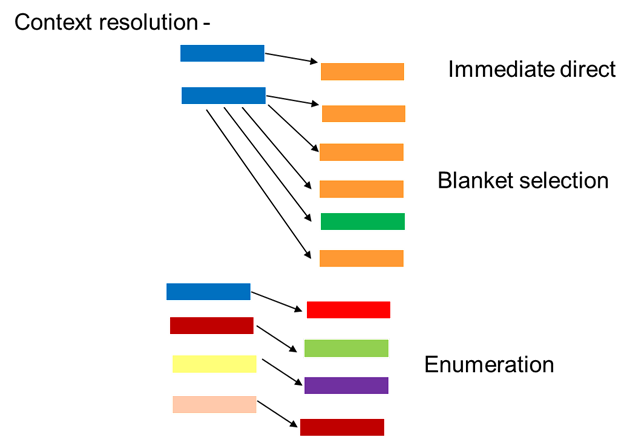

One approach to context inference is to apply the rules used for inference very time the word appears. This approach is very costly in terms of the resources required for processing. Another approach is to determine the contextual inference the first time the word appears and to apply that context to all further occurrences of the word in a document.

While not perfect, this approach is efficient and works well for most bodies of text.

Using the implied inference for all subsequent appearances of a word can be called context resolution.

When the text in a document is being parsed and meaning is attached to the words being processed, how is the context of the word resolved?

The simplest resolution occurs when there is immediate context. Although it is possible that a mistaken resolution can occur, immediate adjacency of words has a very high degree of proper and accurate resolution.

The second way words can have the inference of their context resolved is to simply say that all occurrences of a word have the same context. In many cases this is true. But it is not universally true.

For example, suppose a document has the word plant that is found in the document. In almost every case the word plant refers to some sort of botanical structure – a tree, the grass, a shrub, etc. However, in one place in the document the word plant refers to a factory location where manufacturing is being done.

In this case the context of plant has been inferred and resolved incorrectly.

A third way a resolution of text can be done is for each occurrence of a word to have its own contextual resolution. The context of each word is enumerated.

A simple example might be –

Water – water the lawn

Water – ocean

Water – have a sip

Water – the baby is coming any minute now

And so forth

RESOLUTION TRADEOFFS

The challenge with the enumerated approach is that it is very expensive to do. For a large document it is prohibitively expensive to consider.

CONTEXT RIGIDITY

The context of structured data is highly rigid. That is the nature of structured data.

But the context of unstructured data – text – is highly variable. The context can be anything. Typical forms of context for unstructured data include –

Time – when was the word said or what time does the word refer to

Location – where was the word said or where does the word refer to

Cost – how expensive was the word being analyzed

Speaker – who spoke the word that is being analyzed

INTONED CONTEXT

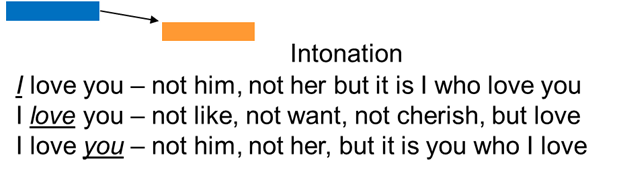

But the complexity of context management in the world of text goes further than the appearance of words found together. Intonation of words also conveys meaning.

Take the simple expression – I love you.

If the emphasis is on – I – the implication is that it is me who loves you, not John, Terry, or Martha. If the emphasis is on – love – the implication is that my feelings for you are love, not like, cherish or detest. If the emphasis is on – you – the implication is on that fact that it is you that I love, not Mary, Susie, or Claudia.

Even though the words are exactly the same, the intonation and speed with which the words are spoken have a great deal of influence on how the words are understood.

CONTEXT COMPLEXITY

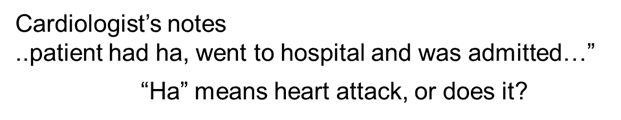

And even in the best of circumstances there can be misinterpretations. For example, how would you interpret the meaning of “ha” in the notes of a cardiologist?

In almost every case the cardiologist is referring to a heart attack when he/she uses the expression. But there is absolutely no reason why the cardiologist might be referring to a headache, not a heart attack.

CONTEXT DEPENDS ON CONTEXT

Context complexity reveals a spectrum of proximity, where one type of context cannot exist without context dependencies. Each layer of context requires its own contextual scaffolding to function. Immediate adjacency means nothing without knowledge of the language. Sentential context collapses without shared semantic conventions. Referential context demands a model of the world. General context presupposes domain knowledge accumulated over years of exposure. Context, in other words, does not interpret itself. Context requires context.

This is where the engineering of meaning is hard. The challenge is less about identifying context and demands knowing which context governs in any particular instance. A cardiologist’s “ha” is ambiguous at multiple levels—there’s the abbreviation presenting as an acronym, the clinical register in which it is utilized, the documentation conventions of the institution and the habits of medical practitioners using ha. Resolving the right meaning requires traversing all of these layers in the correct order. Collapse any single layer, and the wrong meaning propagates onwards.

INSTITUTIONAL CONTEXT

Among the most consequential and least visible forms of context is institutional context — the rules, roles, conventions, and assumptions that govern how language is used within an organization. A term that means something inside one institution can be meaningless or misleading outside it. “Admitted” means something specific in a hospital. “Closed” means something specific in a law firm. “Released” means something specific in a prison. These words carry meaning as stipulated by the organizational framework in which they appear. Institutional context is the water that specialized language swims in. It is almost always invisible to anyone who has not been inside the institution — and almost always unrepresented in the data.

PROVENANCE CONTEXT

Context is also shaped by who is speaking, under what conditions and with what authority. This is provenance context — the record of origin that determines how an utterance should be weighted and interpreted. The same clinical notation carries different interpretive force depending on whether it was written by an attending physician, a resident, nurse or a medical student. The same financial forecast means something unique depending on whether it comes from an internal analyst or an external auditor. Provenance changes everything about how the words are understood. In the absence of provenance, interpretation defaults to assumptions that may be entirely wrong or misinterpreted.

The structured world handles interpretive ambiguity through enforced schemas. Column names constrain values while data types constrain columns and relational constraints control table logic. Structure is, at its core, pre-committed context. Database schemas work because the interpretive framework is declared before the data arrives. The brittleness of structured data — its inability to absorb the unexpected — is the price paid for exacting contextual clarity.

Unstructured text pays the opposite price. Nothing is pre-committed. Context must be inferred, assembled, and continuously re-evaluated. And so strategies for resolving entities described above require immediate inferences, uniform assumptions and full enumerations. But the strategies for handling unstructured text relies upon how much contextual infrastructure you are willing to build and maintain. For example, an enumerated approach is expensive because it treats each word as its own contextual problem. The expense becomes the true cost of meaning.

Data management cannot make context tractable because it only handles structure, storage, and retrieval. Data management on its face does not handle meaning. Meaning is the province of knowledge management and information management — disciplines that are fundamentally social, not technical. Knowledge does not exist in databases, as knowledge involves people and people exist in communities of practice. Within communities of practice, shared conventions allow one professional to understand another without spelling everything out. Information management is the work of making that social knowledge legible, transferable, and reliable across people and over time. It requires governance, curation, editorial judgment, and institutional memory. None of these are properties of data. All of them are properties of human organizations.

This is where AI systems are most exposed. A language model trained on vast amounts of text has absorbed enormous amounts of contextual signals. But signal is not the same as understanding and pattern is not the same as meaning. The model has no institutional memory and carries no provenance. It cannot distinguish between a notation written by a senior clinician with thirty years of practice and one written by a first-year resident on an overnight shift. It has processed the words without inheriting the social fabric that gives the words their weight. Context exists in the negative spaces surrounding text, behind the text and beneath the text. Machines trained only on text inherit none of that surrounding structure unless humans deliberately put it there.

The irreducible complexity of context points to something deeper than an engineering problem. Human beings cannot resolve contextual ambiguity through enumeration or statistical inference alone. Humans are an integral part of context interpretation and derive understanding from lived experiences, cultural knowledge, embodied knowledge and social awareness—all facets that evade capture in formal systems.

The cardiologist who writes “ha” has internalized a clinical world — its institutional rhythms, its provenance chains and its unwritten conventions. When the cardiologist’s notation is flagged as ambiguous, the residuals of interpretation are revealed, which formal systems don’t capture. The residuals are the hallmarks of meaning that lives in human practice.

Machines can improve how they document, record and represent context but must rely upon humans to understand and model context. Context is a human-led social agreement that must be maintained. Humans decide what counts as context and what constitutes knowledge. These are not decisions that can be automated away or delegated to a model. They require governance, judgment, and the kind of institutional accountability that only human organizations can provide. The work is sociotechnical by nature— the deliberate alignment of human knowledge practices with the systems built to extend them.

Context will always depend on context.

ABOUT THE AUTHORS

W.H. Inmon is widely recognized as the father of the data warehouse and has spent decades helping organizations structure and leverage their data assets for analytics and decision-making. Bill has sold over 1,500,000 books in his life

Jessica Talisman is a semantic infrastructure consultant and knowledge organization expert specializing in ontology development, controlled vocabularies, and enterprise semantic architectures that bridge library science principles with modern AI and knowledge management systems. Jessica created the Ontology Pipeline, a framework for building semantic knowledge infrastructures and ontologies.

Some of Bill’s latest books include –

DATA ARCHITECTURE – BUILDING THE FOUNDATION, with Dave Rapien, Technics Publications.

STONE TO SILICON – A HISTORY OF TECHNOLOGY AND COMPUTERS, with Dr Roger Whatley, Technics Publications.

Articles and presentations by Jessica can be found on Linkedin, Substack and Youtube.