MONETIZING GOVERNANCE: TRANSFORMING RAW DATA INTO MONETIZABLE ASSETS IN THE AI ERA

Knowledge Governance: Transforming Raw Data into Monetizable Assets in the AI Era

By

Derek Strauss (Gavroshe USA, Inc.) and Alan Rodriguez (Data Freedom Foundation)

Executive Summary

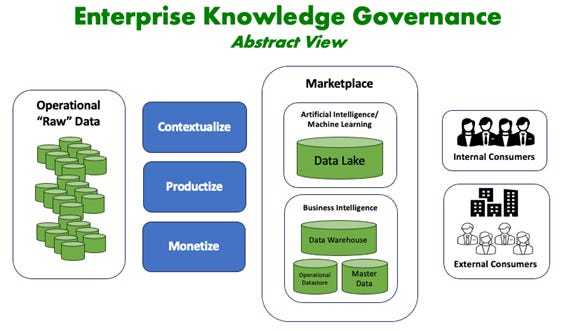

In the modern business landscape, organizations are inundated with vast amounts of data generated by customer interactions, operational processes, and external partnerships. Despite this abundance, many struggle to convert data into actionable insights and tangible financial gains. The challenges they face include:

Data overload: the exponential growth of data makes it difficult to manage and to extract meaningful information.

Siloed systems: data is trapped in disparate systems across departments, preventing a unified view.

Unrealized value: investments in data technologies often fail to translate into financial returns because of human factors, technical complexity, and mounting ‘data debt’.

Governance risks: compliance with evolving data privacy and security regulations adds further complexity and risk.

To address these challenges, AI-driven Knowledge Governance emerges as a transformative framework. It unites emerging AI governance and traditional data governance to transform raw data into actionable knowledge, driving significant financial returns. Central to this is the Data Liquidity Protocol (DLP), which revolutionizes data governance by embedding cryptographic proofs directly into data products, ensuring they are verifiable, secure, compliant, and monetization-ready. DLP binds five essential cryptographic proofs to every data product: Proof of Integrity for provenance and quality, Proof of Ownership for enforceable control, Proof of Security for layered protection, Proof of Compliance for privacy-preserving adherence to regulations, and Proof of Value for measuring ROI from every data use case.
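To make the idea of binding proofs to a data product more concrete, here is a minimal sketch in Python. It is not the DLP specification: the envelope layout, the claim names, and the use of SHA-256 hash commitments plus an HMAC tag (a symmetric stand-in for the public-key signatures and privacy-preserving proofs a real protocol would use) are all assumptions made for illustration.

```python
import hashlib
import hmac
import json

# The five proof types named in the text; the envelope layout is hypothetical.
PROOF_TYPES = ("integrity", "ownership", "security", "compliance", "value")


def _sha256(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def _commit(content_hash: str, claim) -> str:
    # Hash commitment that binds one claim to one specific payload.
    return _sha256((content_hash + json.dumps(claim, sort_keys=True)).encode())


def _tag(key: bytes, content_hash: str, proofs: dict) -> str:
    body = json.dumps({"content_hash": content_hash, "proofs": proofs}, sort_keys=True)
    # HMAC stands in for the owner's digital signature in this sketch.
    return hmac.new(key, body.encode(), hashlib.sha256).hexdigest()


def seal(payload: bytes, claims: dict, owner_key: bytes) -> dict:
    """Wrap a payload in an envelope carrying one commitment per proof type."""
    missing = set(PROOF_TYPES) - set(claims)
    if missing:
        raise ValueError(f"missing claims: {sorted(missing)}")
    content_hash = _sha256(payload)
    proofs = {kind: _commit(content_hash, claims[kind]) for kind in PROOF_TYPES}
    return {
        "content_hash": content_hash,
        "claims": claims,
        "proofs": proofs,
        "tag": _tag(owner_key, content_hash, proofs),
    }


def verify(payload: bytes, envelope: dict, owner_key: bytes) -> bool:
    """Return True only if the payload, every claim, and the tag are unmodified."""
    content_hash = _sha256(payload)
    if content_hash != envelope["content_hash"]:
        return False  # payload was altered after sealing
    for kind in PROOF_TYPES:
        if _commit(content_hash, envelope["claims"].get(kind)) != envelope["proofs"].get(kind):
            return False  # a claim was altered or removed
    expected = _tag(owner_key, content_hash, envelope["proofs"])
    return hmac.compare_digest(expected, envelope["tag"])
```

Because every commitment includes the payload hash, a proof cannot be lifted from one data product and reattached to another. A production design would replace the HMAC with asymmetric signatures so that any consumer can check ownership without holding the owner's secret key.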

By combining Knowledge Governance with DLP, organizations can automate compliance, manage risk, enhance data quality, unleash innovation, and unlock new revenue streams. This combination allows organizations to transform raw data into valuable, marketable assets without exposing sensitive information or violating regulatory standards.

The process unfolds in three key phases:

Contextualization: Use AI tools to refine and structure raw data, adding context and meaning. This involves AI-driven modeling to organize data into meaningful categories; data mapping, which uses taxonomies and ontologies to link every siloed instance of each critical data element; and continuous refinement, which maintains data quality and relevance through profiling and lineage tracking.

Productization: Convert structured knowledge into reusable and scalable data products. Key steps include data cleansing to finalize quality, accuracy, and readiness; product design leveraging AI to create user-friendly and valuable data products; connectivity to ensure interoperability across systems and platforms; and deployment to integrate data products into workflows for real-time use.

Monetization: Deploy data products to generate financial value through two routes: internal monetization, which uses data products within the organization to enhance operational efficiency and reduce costs, and external monetization, which generates new revenue streams by selling or licensing data products to customers and partners or through data marketplaces.

Implementing Knowledge Governance provides a significant competitive advantage: accelerated time to value through AI automation, new revenue streams by unlocking underutilized data, and improved decision-making with real-time, predictive insights. Key benefits include financial asset creation by systematically transforming data into monetizable knowledge assets, enhanced governance and compliance with real-time adherence to regulations, faster insights for immediate decision-making, and measurable ROI to demonstrate financial returns on data investments.

Combined with AI tools such as knowledge graphs, agentic systems, and large language models, Knowledge Governance enables proactive governance, automated compliance, enhanced trust, and new revenue streams, positioning data as a core profit center rather than a cost center.

Transforming Data Governance with AI

Traditional data governance often struggles with inefficiency because of manual processes, siloed data systems, and reactive approaches to risk and compliance. These limitations hinder the ability to derive timely insights and fully capitalize on data assets. Siloed systems scatter data across departments and platforms, fragmenting insights and preventing a unified organizational view. Manual processes depend on human intervention, making governance slow, error-prone, and unable to keep pace with real-time data flows. Time-consuming data integration and analysis delay insights, resulting in missed opportunities and slow decision-making. Limited scalability makes it difficult to extend governance practices across growing data volumes and diverse data types. A reactive approach addresses governance issues after they occur rather than preventing them, which increases risk.

AI changes this by enabling automated, proactive, and scalable practices. Standardized metadata becomes the foundation for consistency, interoperability, and future-proofing across complex ecosystems, supporting semantic integration via ontologies and knowledge graphs as well as unified handling of structured and unstructured data. By adopting industry-standard metadata frameworks, governance achieves consistency across systems, because uniform data descriptions reduce ambiguity and enable seamless integration; interoperability with external ecosystems, because alignment with global protocols facilitates collaboration with partners and compliance with regulatory frameworks; and scalability for future growth, because new data types, AI models, and governance requirements can be integrated as they emerge, ensuring long-term adaptability.
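As a minimal illustration of how a shared metadata vocabulary removes ambiguity, the sketch below maps two departments' local field names onto DCAT-style terms (`dct:title`, `dct:description`, `dct:publisher`, `dcat:keyword`). The department schemas and the mapping table are hypothetical; DCAT is used only as one example of an industry-standard vocabulary.

```python
# Hypothetical local schemas mapped to DCAT / Dublin Core terms.
LOCAL_TO_STANDARD = {
    "finance": {
        "ds_name": "dct:title",
        "summary": "dct:description",
        "dept_owner": "dct:publisher",
        "labels": "dcat:keyword",
    },
    "marketing": {
        "title": "dct:title",
        "about": "dct:description",
        "team": "dct:publisher",
        "tags": "dcat:keyword",
    },
}


def to_standard(source: str, record: dict) -> tuple:
    """Translate a local metadata record; return (standard record, unmapped keys)."""
    mapping = LOCAL_TO_STANDARD[source]
    standard = {"@type": "dcat:Dataset"}
    unmapped = []
    for local_key, value in record.items():
        std_key = mapping.get(local_key)
        if std_key is None:
            unmapped.append(local_key)  # surfaced for a data steward to review
        else:
            standard[std_key] = value
    return standard, unmapped
```

After translation, records from both departments share the same keys, so downstream tools can search and join them without knowing each department's conventions. Unmapped keys are returned rather than silently dropped so that gaps in the mapping stay visible.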

As data architectures evolve, the roles of data models and ontologies are foundational. Data models serve as internal-facing blueprints—much like a compass and map—for structuring and storing repetitive, key-dominated structured data. They define entities, attributes, relationships, and keys (e.g., through entity-relationship diagrams, data item sets, and DDL), providing vertical organization tailored to the enterprise’s operational needs.
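To ground this description of data models, here is a small sketch using Python's built-in `sqlite3` module. The DDL defines two hypothetical entities (`customer` and `account`) with their attributes, primary keys, and a foreign-key relationship, and the database then rejects rows that violate that relationship.

```python
import sqlite3

# Hypothetical entities: a customer owns zero or more accounts.
DDL = """
CREATE TABLE customer (
    customer_id INTEGER PRIMARY KEY,   -- key
    name        TEXT NOT NULL,         -- attribute
    email       TEXT UNIQUE            -- attribute with a uniqueness rule
);
CREATE TABLE account (
    account_id  INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customer (customer_id),  -- relationship
    opened_on   TEXT NOT NULL
);
"""


def build_model() -> sqlite3.Connection:
    """Create the schema in an in-memory database with foreign keys enforced."""
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves FK checks off by default
    conn.executescript(DDL)
    return conn
```

The point of the sketch is that the data model is enforced rather than merely documented: an `account` row that references a nonexistent `customer_id` raises `sqlite3.IntegrityError`.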

In contrast, ontologies address the vast majority of corporate data—unstructured text, which often comprises 95% or more and remains underutilized due to its free-form, non-repetitive nature. An ontology is a formal specification of a shared conceptualization: the ultimate controlled vocabulary that captures concepts, entities, properties, relationships, and contexts within a domain. Built from one or more taxonomies (focused vocabularies pairing words with contextual meanings), ontologies provide horizontal, dynamic structure. They resolve key challenges in text, such as polysemy (e.g., “fire” as combustion, weapon discharge, or termination), negation (e.g., “did not have cancer”), and multilingual variations, by deriving context from surrounding content.
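The polysemy example can be illustrated with a deliberately simple sketch: a hypothetical mini-ontology ties each sense of "fire" to a concept and a set of cue words, and the sense whose cues overlap most with the surrounding words wins. This cue-word overlap is an assumption chosen for brevity, not a production disambiguation method; real ontologies use richer relationships and context.

```python
import re
from typing import Optional

# Hypothetical mini-ontology: each sense of an ambiguous term maps to a concept
# plus cue words that signal that sense when they appear nearby.
SENSES = {
    "fire": {
        "combustion": {"smoke", "flames", "burning", "firefighters", "blaze"},
        "weapon_discharge": {"gun", "rifle", "shots", "trigger", "weapon"},
        "termination": {"employee", "manager", "job", "hr", "dismissed"},
    }
}


def disambiguate(term: str, sentence: str, window: int = 6) -> Optional[str]:
    """Pick the sense whose cue words overlap most with the term's context."""
    tokens = re.findall(r"[a-z]+", sentence.lower())
    if term not in tokens or term not in SENSES:
        return None
    i = tokens.index(term)
    context = set(tokens[max(0, i - window): i + window + 1])
    scores = {sense: len(cues & context) for sense, cues in SENSES[term].items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else None  # no evidence: leave unresolved
```

For example, "The manager decided to fire the employee" resolves to `termination`, while "He heard the rifle fire twice" resolves to `weapon_discharge`. Returning `None` when no cue is present keeps the term flagged for review instead of guessing.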

While data models are static and internal (enterprise-defined), ontologies are external-facing, drawing from common language usage to enable semantic disambiguation and believability. In many ways, the ontology is to text what the data model is to structured data—both unify chaos and provide essential governance. Without ontologies, text lacks context and becomes “pointless” or even “dangerous”; with them, unstructured data gains structure, lineage, and usability, integrating seamlessly with structured sources.

The Cognitive Engine revolutionizes data governance by leveraging artificial intelligence to automate, scale, and enhance governance processes. It integrates advanced AI technologies to create a dynamic and responsive framework. For automated and proactive governance, it offers real-time enforcement, in which AI continuously monitors data usage, dynamically adjusts data contracts, and ensures compliance without manual oversight. Policy automation embeds data contracts within data products shared with AI solutions, automating tasks such as data quality checks, access controls, and compliance enforcement. Issue detection and resolution proactively identifies potential governance issues, such as data anomalies or security risks, and initiates corrective actions.
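A data contract embedded in a data product can be pictured as a small, machine-checkable object. The sketch below is a hypothetical contract format (required fields with expected types, allowed consumer roles, and a tolerated null rate), not a standard schema; it shows how access control and quality checks can run automatically on every request.

```python
from dataclasses import dataclass


@dataclass
class DataContract:
    """Hypothetical contract embedded in a data product."""
    required_fields: dict       # field name -> expected Python type
    allowed_roles: set          # roles permitted to consume the product
    max_null_rate: float = 0.0  # tolerated share of missing values per field


def enforce(contract: DataContract, records: list, role: str) -> list:
    """Return a list of violations; an empty list means the request passes."""
    if role not in contract.allowed_roles:
        return [f"access denied for role '{role}'"]  # access control runs first
    violations = []
    for name, expected_type in contract.required_fields.items():
        values = [r.get(name) for r in records]
        nulls = sum(v is None for v in values)
        if records and nulls / len(records) > contract.max_null_rate:
            violations.append(f"{name}: null rate {nulls / len(records):.0%} "
                              f"exceeds {contract.max_null_rate:.0%}")
        wrong = [v for v in values if v is not None and not isinstance(v, expected_type)]
        if wrong:
            violations.append(f"{name}: {len(wrong)} value(s) not {expected_type.__name__}")
    return violations
```

Returning a list of violations, rather than raising on the first one, lets an automated agent log every issue and decide on a corrective action such as quarantining the batch.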

For scalable governance across complex environments, it provides a unified governance framework that manages both structured and unstructured data uniformly, eliminating blind spots and ensuring consistent policy application. Global scalability adapts to various regulatory environments, enabling organizations to scale governance practices globally without added complexity. Dynamic adaptation adjusts governance strategies in real-time based on data flow changes, new data sources, or evolving regulations.

Enhanced data lineage and provenance allow every data product to meticulously record its origin, movements, transformations, and interactions, providing multiparty observability and traceability. Immutable records maintain tamper-proof logs of multiparty data activities, essential for audits and compliance verification. Accountability ensures stakeholders know how data has been used and by whom.
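One common way to make a lineage log tamper-evident is to hash-chain its entries, so that each record commits to the one before it. The sketch below assumes that technique; the entry fields (`actor`, `action`, `dataset`) are hypothetical, and the text does not specify how the Cognitive Engine stores its records.

```python
import hashlib
import json
import time
from typing import Optional


class LineageLog:
    """Append-only log in which each entry commits to the hash of the previous one."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list = []

    @staticmethod
    def _hash(body: dict) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, actor: str, action: str, dataset: str, ts: Optional[float] = None) -> str:
        body = {
            "actor": actor,
            "action": action,
            "dataset": dataset,
            "ts": time.time() if ts is None else ts,
            "prev": self.entries[-1]["hash"] if self.entries else self.GENESIS,
        }
        entry = dict(body, hash=self._hash(body))
        self.entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Recompute the chain; editing, reordering, or deleting a non-final entry breaks it."""
        prev = self.GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or self._hash(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

    def who_used(self, dataset: str) -> list:
        """Accountability view: (actor, action) pairs recorded for one dataset."""
        return [(e["actor"], e["action"]) for e in self.entries if e["dataset"] == dataset]
```

A hash chain alone cannot detect truncation of the newest entries or a wholesale rewrite by someone holding the whole log; publishing the latest hash to an external party or ledger is what makes the record effectively immutable across multiple parties.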

Improved compliance and regulatory adherence comes from automated compliance monitoring, which enforces the relevant policies of regulations such as GDPR, HIPAA, and CCPA in real time, and from consent management, which dynamically manages user consents and preferences so that data usage aligns with individual rights.
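Purpose-based consent can be sketched as a registry consulted before any record is used. The registry structure, purpose names, and expiry handling below are assumptions for illustration; they are not drawn from GDPR, HIPAA, or CCPA text, which impose far more detailed requirements.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ConsentRegistry:
    """Hypothetical registry: subject -> {purpose: expiry (None means no expiry)}."""
    grants: dict = field(default_factory=dict)

    def grant(self, subject: str, purpose: str, expires: Optional[datetime] = None) -> None:
        self.grants.setdefault(subject, {})[purpose] = expires

    def revoke(self, subject: str, purpose: str) -> None:
        self.grants.get(subject, {}).pop(purpose, None)

    def permits(self, subject: str, purpose: str, at: Optional[datetime] = None) -> bool:
        purposes = self.grants.get(subject, {})
        if purpose not in purposes:
            return False  # no consent recorded for this purpose
        expiry = purposes[purpose]
        now = at or datetime.now(timezone.utc)
        return expiry is None or now < expiry


def filter_by_consent(records: list, registry: ConsentRegistry, purpose: str) -> list:
    """Keep only records whose data subject has consented to this purpose."""
    return [r for r in records if registry.permits(r["subject_id"], purpose)]
```

Because the check happens at the moment of use, a revocation takes effect on the next query without reprocessing the underlying data.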

Advanced AI technologies power this shift:

AI co-pilots assist human decision-making.

Large language models process unstructured content, grounded by ontologies to move beyond probabilistic patterns toward rule-based reasoning and inference.

Knowledge graphs enable reasoning over interconnected relationships.

AI agents autonomously handle monitoring, anomaly detection, and policy adaptation.
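The monitoring and anomaly-detection task an agent performs can be reduced, for illustration, to a z-score check on a data-quality signal such as daily row counts. This is a simple statistical stand-in, assumed here for brevity; the text does not say which detection methods the agents use.

```python
from statistics import mean, stdev


def detect_anomalies(values: list, threshold: float = 3.0, warmup: int = 5) -> list:
    """Return indices whose value lies more than `threshold` standard deviations
    from the mean of all earlier values (a simple z-score monitor)."""
    flagged = []
    for i in range(warmup, len(values)):
        history = values[:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            if values[i] != mu:
                flagged.append(i)  # any change from a perfectly flat history
        elif abs(values[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged
```

For the daily row counts `[1000, 1010, 990, 1005, 995, 1002, 20, 1001]`, only index 6 (the sudden drop to 20) is flagged. Note that the outlier stays in the baseline and inflates later standard deviations; robust statistics such as the median absolute deviation avoid that masking effect.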

Metadata is especially critical in agentic AI systems, providing semantic context, dynamic rules, and interoperability for autonomous governance tasks. Ontologies enhance this by incorporating formal logic, property inheritance, and constraints, preventing nonsensical outputs and avoiding siloed semantic issues.
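Property inheritance and constraints can be shown with a tiny, hypothetical ontology fragment: a class hierarchy plus a declared domain for each property. A candidate fact, for instance one proposed by an LLM, is accepted only if the subject's class inherits from the property's domain, which is how an ontology blocks nonsensical statements such as a vital sign having a dosage.

```python
# Hypothetical ontology fragment: class hierarchy plus property domains.
SUBCLASS_OF = {
    "Medication": "Treatment",
    "Treatment": "ClinicalConcept",
    "VitalSign": "Observation",
    "Observation": "ClinicalConcept",
}
PROPERTY_DOMAIN = {
    "hasDosage": "Medication",        # only medications carry a dosage
    "hasUnit": "Observation",         # inherited by VitalSign
    "recordedOn": "ClinicalConcept",  # inherited by every clinical class
}


def ancestors(cls: str) -> list:
    """The class itself followed by every superclass up to the root."""
    chain = [cls]
    while chain[-1] in SUBCLASS_OF:
        chain.append(SUBCLASS_OF[chain[-1]])
    return chain


def is_valid(subject_class: str, prop: str) -> bool:
    """Accept a (subject, property) pairing only if the subject's class
    inherits from the property's declared domain."""
    if prop not in PROPERTY_DOMAIN:
        return False  # unknown properties are rejected rather than guessed
    return PROPERTY_DOMAIN[prop] in ancestors(subject_class)


def filter_triples(triples: list, class_of: dict) -> list:
    """Drop candidate (subject, property, value) triples that violate the ontology."""
    return [t for t in triples if is_valid(class_of.get(t[0], ""), t[1])]
```

Inheritance means `recordedOn` never has to be redeclared for `VitalSign` or `Medication`: it is declared once on `ClinicalConcept` and applies to every subclass.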

Benefits include efficiency gains by automating manual tasks, reducing time and resources for governance; faster insights with real-time data readiness for decision-making; risk reduction through proactive issue detection and resolution; cost savings from streamlined operations and reduced compliance penalties; and enhanced stakeholder trust with transparent, accountable data practices—critical for scaling operations and maintaining competitive edge.

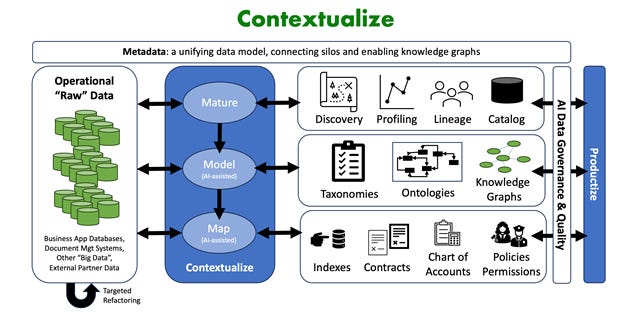

Contextualization: From Raw Data to Actionable Knowledge

Raw data holds limited value until refined. Contextualization adds relevance, structure, and meaning, transforming raw data into knowledge that is ready for analysis and decision-making. It bridges the gap between data collection and utilization, enabling organizations to derive value from their data assets.

Active metadata management drives continuous discovery, behavioral insights, automated enrichment, and semantic integration, bridging silos and creating a living knowledge framework. In contextualization, this means ongoing data discovery and profiling, behavioral metadata that captures data usage patterns, automated enrichment with AI-generated tags, and semantic metadata that links concepts through ontologies.
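Continuous profiling and automated enrichment can be sketched as a function that turns a column of values into an active-metadata record. The regex-based tagger below stands in for AI-generated tags, and the tag names and sensitivity rule are hypothetical.

```python
import re
from collections import Counter

# Rule-based tagger standing in for AI-generated tags (patterns are illustrative).
TAG_RULES = {
    "email": re.compile(r"^[^@\s]+@[^@\s]+\.[a-z]{2,}$", re.IGNORECASE),
    "us_zip": re.compile(r"^\d{5}(-\d{4})?$"),
    "iso_date": re.compile(r"^\d{4}-\d{2}-\d{2}$"),
}
SENSITIVE_TAGS = {"email"}  # hypothetical classification policy


def profile_column(name: str, values: list) -> dict:
    """Build an active-metadata record for one column: profile plus tags."""
    present = [v for v in values if v not in (None, "")]
    tags = [tag for tag, rx in TAG_RULES.items()
            if present and all(rx.match(str(v)) for v in present)]
    return {
        "column": name,
        "null_rate": 1 - len(present) / len(values) if values else 0.0,
        "distinct": len(set(present)),
        "top_value": Counter(present).most_common(1)[0][0] if present else None,
        "tags": tags,
        "sensitive": bool(SENSITIVE_TAGS & set(tags)),
    }
```

Rerunning the profile whenever new data lands is what makes the metadata "active": a rising null rate or a newly detected sensitive tag becomes a governance signal rather than a stale catalog entry.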

The Data Liquidity Protocol embeds cryptographic integrity early, ensuring provenance, compliance, and trustworthiness from the start. In contextualization, DLP applies proof of integrity to verify data provenance and quality during refinement, proof of compliance to embed privacy proofs from the outset, and proof of value to track early ROI indicators.

AI-powered contextualization with the Cognitive Engine automates profiling and classification, pattern recognition and anomaly detection, taxonomy and ontology building, quality monitoring and remediation, and lineage tracking, so that data is continuously refined and enriched.

In this phase, data models and ontologies play complementary, essential roles. For structured elements, data models enforce precise relationships and storage schemas. For unstructured sources—like emails, documents, reports, or sensor narratives—ontologies (rooted in taxonomies) provide the critical semantic layer. Processes akin to Textual ETL scan raw text against ontology taxonomies to extract meaningful chunks (e.g., concepts or word-level elements with context), disambiguate terms, and place them in structured, horizontal databases—complete with lineage for traceability.

For example, in a medical context, an ontology might map “envarsus” to “medication” or “blood pressure” to “vital sign,” enabling accurate extraction even from free-form notes. Iterative refinement allows taxonomy adjustments (adding/deleting contexts) and quick reruns, turning vast unstructured volumes into analyzable insights rapidly. This not only resolves ambiguities but supports inference, governance, and integration with structured data.
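The scan-and-extract process described above can be approximated in a short sketch: match taxonomy terms in free text, flag simple negations within the same sentence, and emit rows that carry the document ID and character offset as lineage. This is an illustration of the general idea only; it is not the Textual ETL product, and the taxonomy, negation cues, and 30-character lookback are assumptions.

```python
import re
from dataclasses import dataclass

# Hypothetical taxonomy fragment: surface term -> concept.
TAXONOMY = {
    "envarsus": "medication",
    "blood pressure": "vital sign",
    "heart rate": "vital sign",
    "cancer": "diagnosis",
}
# Crude negation cues; real systems use scoped rules rather than a word list.
NEGATION = re.compile(r"\b(no|not|denies|denied|without)\b")


@dataclass
class Extract:
    doc_id: str    # lineage: which document
    offset: int    # lineage: where in the document
    term: str
    concept: str
    negated: bool


def textual_etl(doc_id: str, text: str, taxonomy: dict = TAXONOMY, lookback: int = 30) -> list:
    """Scan free text for taxonomy terms and emit structured, traceable rows."""
    lowered = text.lower()
    rows = []
    for term, concept in taxonomy.items():
        for m in re.finditer(r"\b" + re.escape(term) + r"\b", lowered):
            # Look back only within the current sentence ('.' is a crude boundary).
            window = lowered[max(0, m.start() - lookback):m.start()].split(".")[-1]
            rows.append(Extract(doc_id, m.start(), term, concept,
                                bool(NEGATION.search(window))))
    return sorted(rows, key=lambda r: r.offset)
```

Run on "Patient takes Envarsus daily. Blood pressure was 130/85. Patient did not have cancer.", the sketch yields three rows: `envarsus` as a medication, `blood pressure` as a vital sign, and `cancer` as a diagnosis marked negated. Adjusting the taxonomy dictionary and rerunning mirrors the iterative refinement loop described in the text.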

The strategic importance for data leaders lies in making raw data actionable, supporting compliance through built-in proofs, and fostering innovation with enriched knowledge. Contextualization also lays the foundation for productization by creating structured knowledge ready to be packaged into data products.

By combining data models for structured precision and ontologies for unstructured context, contextualization unlocks holistic knowledge, supports compliance, fosters innovation, and lays the groundwork for reusable products. Examples include fraud detection in finance, where contextualized transaction data reveals patterns; personalized diagnostics in healthcare, where patient records are enriched with ontologies for better insights; and predictive maintenance in manufacturing, where sensor data is structured for proactive alerts.

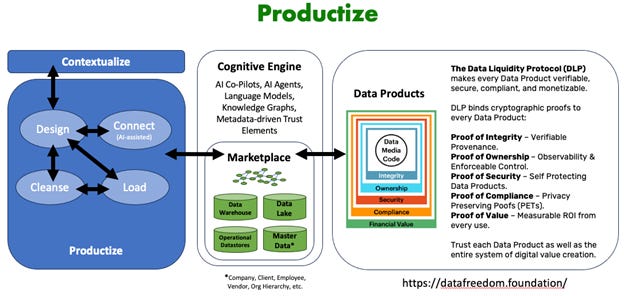

Productization: Turning Knowledge into Reusable Data Products

Productization turns knowledge into tangible assets: reusable, scalable data products for internal workflows or external offerings. Its role is to package contextualized knowledge into standardized formats that can be easily integrated and scaled, so that knowledge becomes a set of operational tools.

Active metadata management in productization maintains catalogs for discoverability, enforces policies by design, enables user-centric discovery, and tracks lifecycle and value realization. It relies on metadata catalogs for product discovery, policy-embedded metadata for governance, user-centric metadata for accessibility, and value-tracking metadata for ROI measurement.
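The four kinds of productization metadata can live together in a single catalog record. The sketch below uses hypothetical field names to show discovery by tag, embedded policies, lifecycle status, and a usage counter for value tracking; version strings are compared numerically so that "1.10" sorts after "1.9".

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """Hypothetical catalog record for one version of a data product."""
    name: str
    version: str
    owner: str
    tags: set = field(default_factory=set)        # user-centric discovery
    policies: list = field(default_factory=list)  # policy-embedded metadata
    status: str = "active"                        # lifecycle: active -> deprecated
    uses: int = 0                                 # value-tracking metadata


def _version_key(entry: CatalogEntry) -> tuple:
    return (entry.name, tuple(int(p) for p in entry.version.split(".")))


class Catalog:
    def __init__(self) -> None:
        self._entries: dict = {}

    def register(self, entry: CatalogEntry) -> None:
        self._entries[(entry.name, entry.version)] = entry

    def search(self, tag: str) -> list:
        """Active products carrying a tag, ordered by name and numeric version."""
        hits = [e for e in self._entries.values() if tag in e.tags and e.status == "active"]
        return sorted(hits, key=_version_key)

    def record_use(self, name: str, version: str) -> None:
        self._entries[(name, version)].uses += 1

    def deprecate(self, name: str, version: str) -> None:
        self._entries[(name, version)].status = "deprecated"
```

Deprecated versions stay in the catalog for lineage and audit purposes but drop out of discovery, which steers consumers toward the current release without deleting history.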

The Data Liquidity Protocol in productization certifies cleansed data with proofs of integrity, ownership, security, compliance, and value; supports interoperable design; and enables versioned deployment. Proof of ownership enforces control, proof of security provides protection, and proof of value establishes monetization readiness.

AI-powered productization with the Cognitive Engine streamlines cleansing for quality assurance, product design for customizable and user-friendly formats, connectivity via open standards and APIs, and real-time updates for dynamic products.

The strategic importance for data leaders includes faster time-to-market through automated processes, consistent quality through AI oversight, flexibility in adapting products, and enhanced value for both internal and external use. This phase opens the path to financial advantage by preparing products for monetization.

Outcomes include faster time-to-market, consistent quality, flexibility, and enhanced value—enabling operational dashboards, risk models, and marketplace offerings. This maximizes utilization, drives innovation, diversifies revenue, and supports agility.

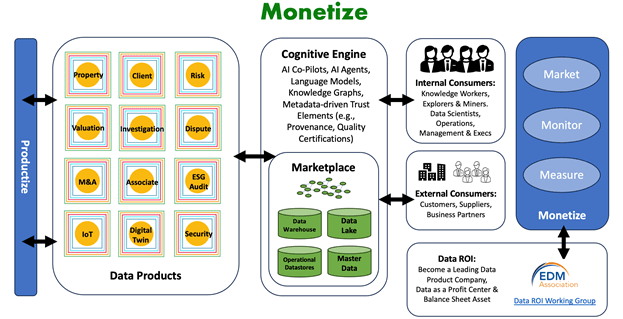

Monetization: Generating Financial Value from Data Products

Monetization realizes bottom-line impact through internal optimization and external revenue. The role of monetization is to convert data products into financial assets, creating new revenue streams and cost savings.

Active metadata management in monetization enables usage-based valuation, regulatory enforcement, marketplace readiness, and ROI analytics through valuation metadata for pricing, compliance metadata for enforcement, marketplace metadata for discoverability, and analytics metadata for performance tracking.
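Usage-based valuation can be sketched as graduated (tiered) pricing applied to usage events captured as metadata. The price schedule and event format below are hypothetical; they illustrate the mechanics, not recommended prices.

```python
from collections import Counter

# Hypothetical price schedule: (cumulative call ceiling, price per call in USD).
TIERS = [(1_000, 0.010), (10_000, 0.007), (float("inf"), 0.004)]


def tiered_charge(calls: int, tiers: list = TIERS) -> float:
    """Graduated pricing: each call is billed at the rate of the tier it falls in."""
    charge, lower = 0.0, 0
    for upper, price in tiers:
        if calls <= lower:
            break
        charge += (min(calls, upper) - lower) * price
        lower = upper
    return round(charge, 2)


def invoice(usage_events: list, tiers: list = TIERS) -> dict:
    """usage_events: (consumer, product) pairs captured as usage metadata."""
    counts = Counter(usage_events)
    return {key: tiered_charge(n, tiers) for key, n in counts.items()}
```

Under this schedule, 1,500 calls cost 1,000 × $0.010 + 500 × $0.007 = $13.50, and 12,000 calls cost $10 + $63 + $8 = $81. Graduated tiers avoid the price cliff that flat volume tiers create when a consumer crosses a threshold.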

The Data Liquidity Protocol in monetization supports secure, trustless transactions with dynamic pricing, compliant marketplaces, and subscription models—without exposing sensitive data. It applies all five proofs to ensure trusted monetization.

Metadata-driven trust in data marketplaces builds confidence through verifiable proofs, enabling secure exchanges.

AI-powered monetization with the Cognitive Engine facilitates internal gains such as process automation, risk mitigation, and decision enhancement, as well as external models such as marketplace sales, subscriptions, and licensing.

Key metrics for measuring ROI and financial impact include revenue from data products, cost savings from efficiency, time-to-insight reductions, customer retention improvements, market expansion opportunities, and metadata quality/completeness scores.
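Several of these metrics reduce to simple arithmetic. The sketch below assumes the common definition ROI = (revenue + cost savings − investment) / investment, and it uses a hypothetical list of required metadata fields for the completeness score; organizations will define both to suit their own accounting and governance rules.

```python
# Field names below are an illustrative minimum, not a standard.
REQUIRED_METADATA = ("owner", "description", "lineage", "classification", "retention")


def roi(revenue: float, cost_savings: float, investment: float) -> float:
    """ROI = (revenue + cost savings - investment) / investment."""
    if investment <= 0:
        raise ValueError("investment must be positive")
    return (revenue + cost_savings - investment) / investment


def time_to_insight_reduction(before_hours: float, after_hours: float) -> float:
    """Fractional reduction in time-to-insight, e.g. 0.6 means a 60% reduction."""
    return (before_hours - after_hours) / before_hours


def metadata_completeness(record: dict, required: tuple = REQUIRED_METADATA) -> float:
    """Share of required metadata fields that are actually populated."""
    filled = sum(1 for key in required if record.get(key) not in (None, "", []))
    return filled / len(required)
```

As a purely hypothetical example, a data product that earns $2M in revenue and $1M in cost savings on a $1.5M investment has an ROI of 1.0 (100%), and a catalog record with only an owner and a description scores 0.4 on completeness.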

The strategic importance for data leaders is in turning data into a profit center, maximizing ROI, and driving growth through monetized assets.

Real-world examples include subscription-based risk models in finance, personalized recommendations in retail, predictive analytics licensing in healthcare, and consumer insights marketplaces.

Case Studies: Metadata-Driven Governance in Action

To illustrate the practical application of Knowledge Governance, consider these real-world examples across industries, demonstrating how the framework delivers trust, scalability, and financial returns in complex ecosystems.

Financial Services: Real-Time Risk Management with Agentic AI

In a large financial institution, traditional governance struggled with siloed transaction data and manual compliance checks, leading to delayed risk assessments and high operational costs. Implementing Knowledge Governance, the organization used the Cognitive Engine for contextualization, applying ontologies to unstructured fraud reports and data models to structured transactions. Active metadata tracked lineage, while DLP embedded proofs of integrity and compliance.

During productization, AI cleansed and packaged data into reusable risk models, with metadata catalogs enabling discovery. Monetization involved internal use for real-time fraud detection, reducing losses by 25%, and external licensing to partners, generating $8M in annual revenue. AI agents autonomously monitored anomalies, accelerating assessments by 40% and cutting costs by 30%. This not only enhanced compliance with regulations like CCPA but also built trust through immutable provenance, turning data debt into a competitive asset.

Retail: Metadata-Enhanced Consumer Insights Marketplace

A global retail chain faced data overload from customer interactions across online and in-store channels, with siloed systems hindering unified views. Knowledge Governance transformed this through contextualization, using AI to map customer data via taxonomies and ontologies, enriching profiles with behavioral metadata. DLP ensured proof of ownership and security, preventing data leaks.

Productization created scalable insight products, such as personalized recommendation engines, with AI-designed interfaces and API connectivity. Metadata enforced policies and tracked value. For monetization, the chain launched an internal dashboard for inventory optimization, saving $5M in costs, and an external marketplace for anonymized insights, generating $10M annually. Engagement rose 25% through metadata-driven discoverability, fostering collaboration with suppliers and demonstrating measurable ROI through key metrics such as customer retention.

Healthcare: Metadata-Driven Patient Governance and Analytics Licensing

In a healthcare network, compliance risks from regulations like HIPAA and fragmented patient records limited innovation. Knowledge Governance addressed this with contextualization, leveraging ontologies for unstructured medical notes (e.g., disambiguating terms such as “fire” from their context) and data models for structured vital signs. The Cognitive Engine automated lineage tracking, while DLP provided proofs of compliance and value.

Productization packaged knowledge into patient governance tools, with AI ensuring quality and interoperability. Metadata supported dynamic contracts. Monetization included internal use for personalized diagnostics, improving outcomes by 15% and cutting compliance violations by 50%, plus licensing anonymized analytics to research firms for $5M in revenue. This enabled secure data sharing in multiparty ecosystems, enhancing trust and positioning the network as a leader in data-driven care.

Supply Chain: Optimizing Logistics with Decentralized Insights

In a multinational supply chain network, stakeholders struggled with data silos and a lack of trust in shared information. Knowledge Governance enabled contextualization, using AI to map logistics data through ontologies for unstructured reports and data models for inventory. DLP embedded proofs so that insights, rather than raw data, could be shared securely.

Productization created reusable optimization products, with metadata for interoperability. Monetization involved internal efficiency gains, reducing costs by 20%, and external licensing via decentralized marketplaces, adding $7M in revenue. This decentralized approach drove collaboration, innovation, and agility, compounding data value in the knowledge economy.

These cases highlight how Knowledge Governance turns challenges into opportunities, delivering financial advantage through AI, metadata, and DLP.

The Imperative of Governance, Security, and Trust

Robust governance and security are non-negotiable amid breaches, privacy risks, and regulations like GDPR, HIPAA, and CCPA. The imperative stems from the need to protect data integrity while enabling innovation. Knowledge Governance builds trust through proactive controls, immutable provenance via blockchain-integrated metadata, and automated compliance, ensuring data remains compliant, secure, and trustworthy throughout its lifecycle.

Embracing Knowledge Governance distinguishes leaders from laggards, driving innovation, operational excellence, and sustainable financial growth. It offers a comprehensive framework for transforming raw data into strategic knowledge assets and significant financial advantage.

Future Outlook

As the data-driven economy evolves, metadata will play an increasingly pivotal role in Knowledge Governance. Emerging technologies will elevate metadata from a supportive layer to a transformative force, enabling decentralized, secure, and interoperable data ecosystems.

Decentralized metadata frameworks, enabled by Web3, will allow distributed repositories for global interoperability, aligning with DLP. Blockchain-based provenance tracking offers tamper-proof records, with smart contracts automating policies for real-time enforcement.

AI-driven metadata evolution, via generative AI and LLMs, will autonomously enrich metadata, capturing nuanced relationships for enhanced knowledge graphs and agentic systems, accelerating contextualization and productization.

Metadata as a universal language will enable seamless integration across platforms, industries, and jurisdictions, with standardized protocols ensuring data products remain accessible, compliant, and valuable.

The Data Liquidity Protocol will redefine governance and monetization, shifting from centralized control to decentralized ecosystems. DLP’s impact includes decentralized data marketplaces for trading verifiable insights, fostering trust and new business models; AI governance by design, ensuring models use trusted data for transparency; and data as a service, empowering owners to license on their terms with enforced rights.

For example, in supply chains, DLP allows sharing insights while maintaining control, enhancing collaboration and efficiency. Organizations adopting DLP will transition from defending data to compounding its value, preparing for a data-driven future where data drives strategic advantage and growth.

Call to Action

Senior data leaders must champion Knowledge Governance to turn data into a profit center, maximize ROI, ensure compliance, and drive innovation. This framework integrates AI, metadata, and emerging protocols to transform raw data into monetizable assets—positioning organizations for a data-driven future.

As data continues to be a pivotal driver of business success, the time to act is now. By adopting Knowledge Governance, leaders can capitalize on financial opportunities, safeguard assets with automated governance, and demonstrate clear returns. It represents a paradigm shift, moving beyond traditional management to a holistic approach integrating AI, governance, and monetization. For adopters, the potential is clear: a future where data drives strategic, operational, and financial excellence, unlocking its full spectrum as a dynamic engine of growth and profitability.

For a deeper dive into this topic and related material, see our four white papers:

· The Knowledge Governance White Paper defines the strategic imperative: turning data into knowledge and knowledge into financial advantage.

· The Data Liquidity Protocol (DLP) White Paper details the technical foundation: how governed data products with standardized security and integrity envelopes provide cryptographic proof of ownership, integrity, provenance, security, compliance, usage, consent, and value.

· The Digital Rule of Law (DRoL) White Paper outlines the legal foundation: enforceable digital property rights that bind every data product and data transaction to its rightful owner.

· The Data Profit Center (DPC) White Paper brings these frameworks together in practice, translating trust into measurable return and positioning the DPC as the first operational step in the Knowledge Governance framework.

About the Contributors

Derek Strauss (Gavroshe USA, Inc.) and Alan Rodriguez (Data Freedom Foundation) collaborate on frameworks uniting AI and traditional governance. The Data Liquidity Protocol, stewarded neutrally by the Foundation, provides cryptographic foundations for secure, compliant data sharing and monetization.

Derek Strauss

Founder and Chairman of Gavroshe, a consulting company with a 30+ year history that specializes in planning and implementing multi-year Enterprise Transformation Programs using AI and Data & Analytics.

Former Chief Data Officer at TD Ameritrade for approximately five years, responsible for building the Office of the CDO from scratch. This entailed establishing enterprise capabilities in Advanced Analytics and Data Science, Data Governance, Data Architecture, and Enterprise Data Asset Development.

Alan Rodriguez

Founder of Data Freedom Foundation, an open-source, non-profit standards body creating revolutionary Consent and Privacy Technologies.

Invented and coded first-generation payment platforms. Created first-generation global supply chain platforms. Invented and created first-generation marketing, preference center, and customer data management platforms. Invented the Data Liquidity Protocol to secure our data and control how others use it after it has been shared.