THE DATA STACK - GOOD NEWS/BAD NEWS

By W H Inmon, Dan Linstedt, and Cindi Meyersohn

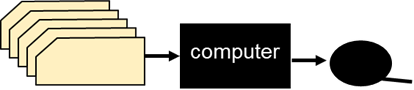

Technology began in the simplest manner. In the beginning there was a computer, some input and some output. The input was usually in the form of punched cards and the output was placed on a magnetic tape file.

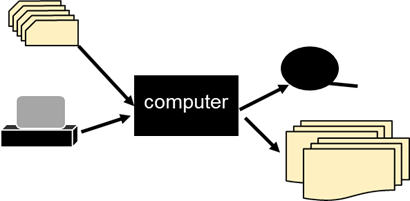

Very quickly (as far as evolutions go) reports were written and input from a terminal became a possibility. With these basic components the IT organization began to blossom. Applications started to spring up like dandelions in the springtime.

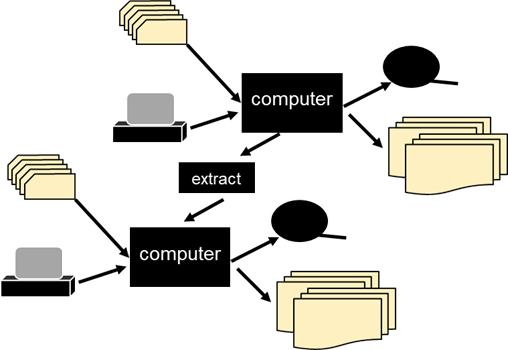

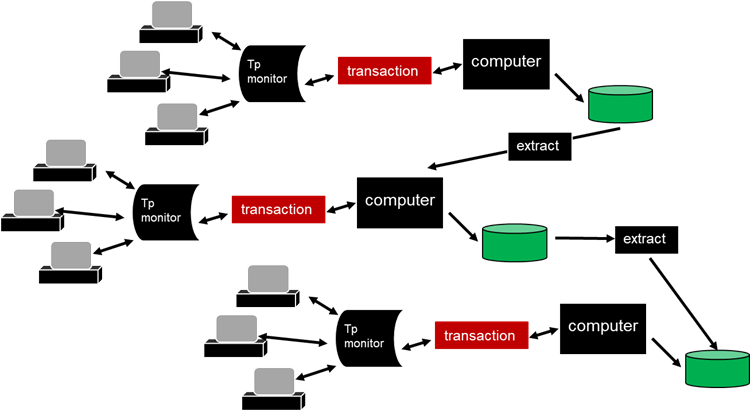

With the proliferation of applications came the innocent extract program. The extract program read data in one place and transferred it to another. There was a need to share data between applications, and it was thought that sharing data was as simple as moving it from one application to the next. Thus was born the extract program.

The volume of data began to cascade. The magnetic tape file was an improvement over the punched card. But the magnetic tape file required that large amounts of data be read sequentially. The magnetic tape file brought with it an overhead of processing that was both onerous and unnecessary. Soon there was the disk. With disk storage data could be accessed directly.

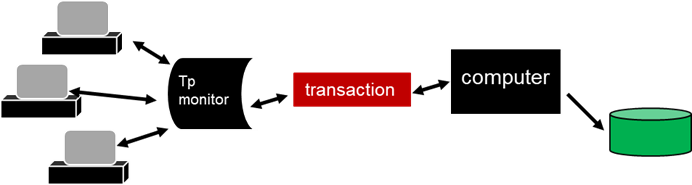

The ability of disk storage to directly access data led to transaction processing. The network accessing the disk storage database was managed by a teleprocessing monitor. The database itself was managed by a high-performance database management system.

The advent of transaction processing opened the door to using the computer in the day-to-day business of the corporation. With transaction processing came ATMs, airline reservation processing, bank teller processing, and so forth.

Once the door to transaction processing was opened, the computer exploded into the world of business. The computer went from being a useful commodity to being at the center of business activity. If the transaction processor went down, business immediately suffered.

Soon data was being extracted from one source to another, creating a bewildering avalanche of data.

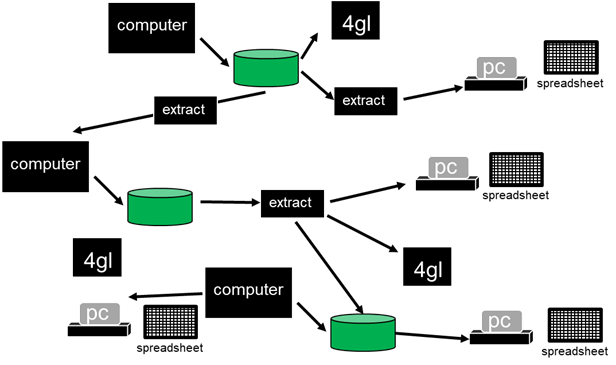

To add to the complexity, the personal computer and the spreadsheet made their appearance, changing the dynamics of the control of data. Computer processing had once been controlled by the IT organization. With the introduction of the personal computer and the spreadsheet, end users could own and manage their own data without the interference of the IT organization.

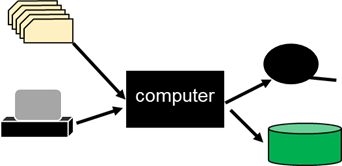

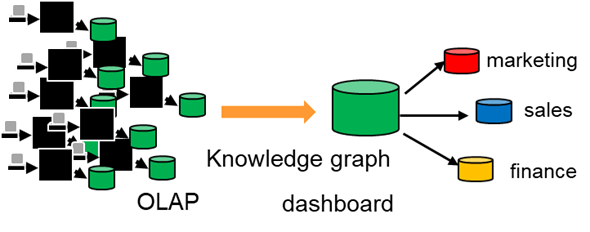

With enterprise data a reality inside a data warehouse, different functional departments wanted their own customized data in a data mart. Marketing, sales, finance and others wanted to take the enterprise data and tailor it to their own purposes.

OLAP and dashboards made their appearance.

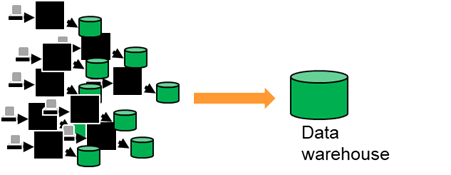

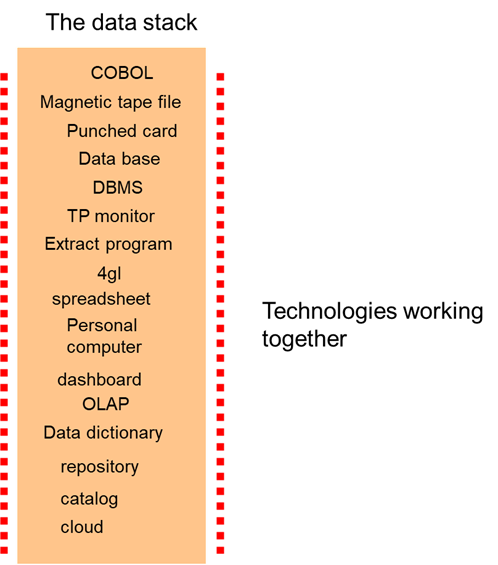

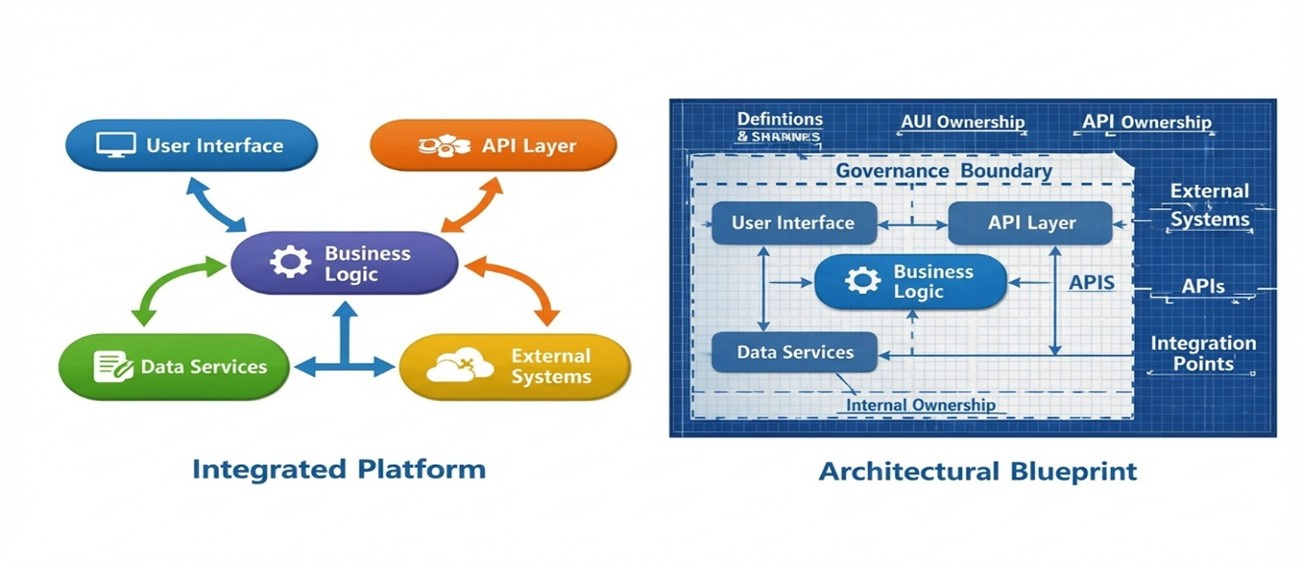

Thus was born the data stack. No single vendor had a complete solution to all of the information needs of the organization. Instead, multiple vendors and their various technologies were needed in order to satisfy the many technical needs of the organization.

Placing those different technologies on top of each other was known as the “data stack”

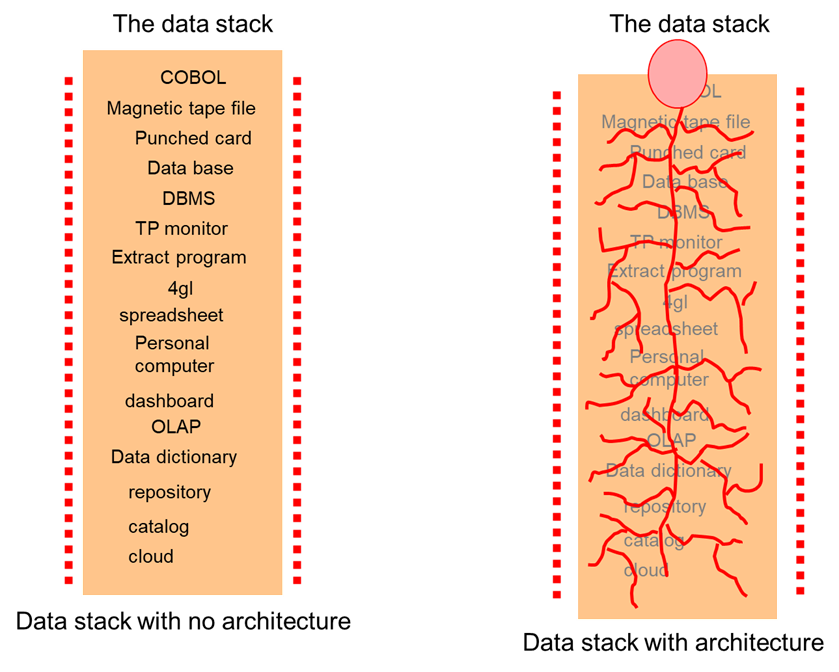

.The data stack appeared to satisfy the myriad needs of the technicians in coping with the avalanche of data. But the data stack had one major drawback. Unless there was an overlying architecture that sat beneath the stack, the stack produced conflicting and incorrect information.

In order to make the data stack successful it was necessary to unify the stack with an architecture.

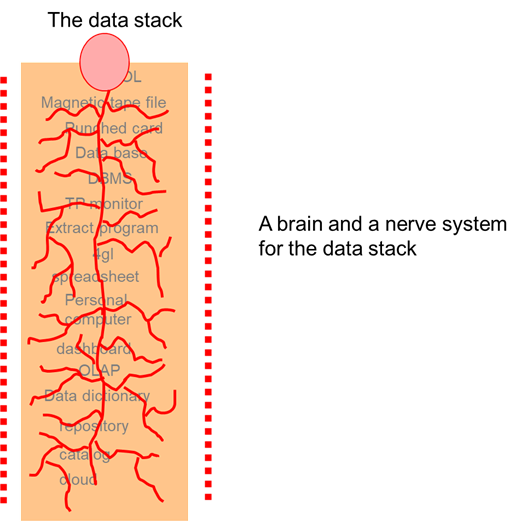

By analogy, the data stack is the human body and the architecture is its brain and nervous system. The human body may be strong and flexible, but without a brain and a nervous system it is worthless.

The same is true of the data stack. The data stack may provide technology needed to store and manage data. But if the data residing in the data stack is not believable, then the best data stack in the world does nothing to solve the problems facing the corporation.

Organizations found that, in order to be successful, it was not sufficient to have just a data stack. Instead, a data stack unified by an architecture of data was a necessity.

There is a simple but uncompromising point to be made: a data stack without architecture cannot be trusted. That observation has aged well, but the environment around it has changed in ways that make the consequences more immediate and more visible. What once surfaced slowly now appears quickly. What once remained internal now emerges under scrutiny. And what was once considered a technical shortcoming is increasingly interpreted as a leadership failure.

The need to continue this discussion is not academic. The modern narrative surrounding platforms has quietly shifted responsibility away from design and toward procurement. Architecture is no longer debated. It is assumed. That assumption now lives inside funding decisions, operating models, and executive expectations in ways that did not exist when the data stack first took shape.

This shift deserves closer examination.

When Platforms Are Treated as Architecture

Modern platforms are no longer positioned as components within a system. They are presented as the system itself. The promise is rarely stated outright, but it is clearly implied: scale replaces structure, automation replaces intent, and integration replaces accountability. Architecture becomes something the platform is assumed to contain rather than something leadership must deliberately establish.

The belief persists because it appears reasonable. Platforms do perform work that once required careful design. They connect sources quickly. They absorb change. They deliver results faster than any prior generation of technology. The conclusion feels earned. If the platform handles complexity, architecture must already be embedded.

But platforms do not define meaning. They move data. They do not establish authority. They process transactions. They do not reconcile conflicting definitions of revenue, risk, customer, or product across business lines.

That work belongs to architecture.

The problem is not that platforms fail. It is that they succeed in ways that obscure responsibility. They move data efficiently without establishing who owns meaning, which transformations are authoritative, or how disagreement is resolved when results conflict. Those questions do not disappear. They simply resurface later, under conditions where time, patience, and optionality no longer exist.

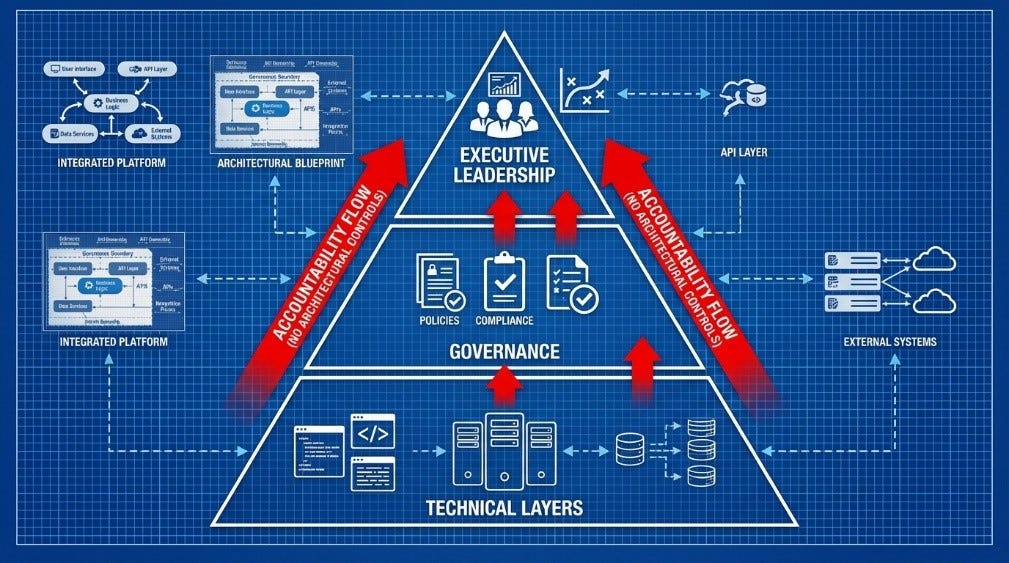

At that moment, the distinction becomes clear. A platform can coordinate activity. Only architecture can coordinate accountability.

Speed Has Changed the Cost of Being Wrong

Earlier generations of data systems failed slowly. Inconsistencies accumulated quietly. Reports disagreed, but the impact was often localized. Time absorbed architectural weakness.

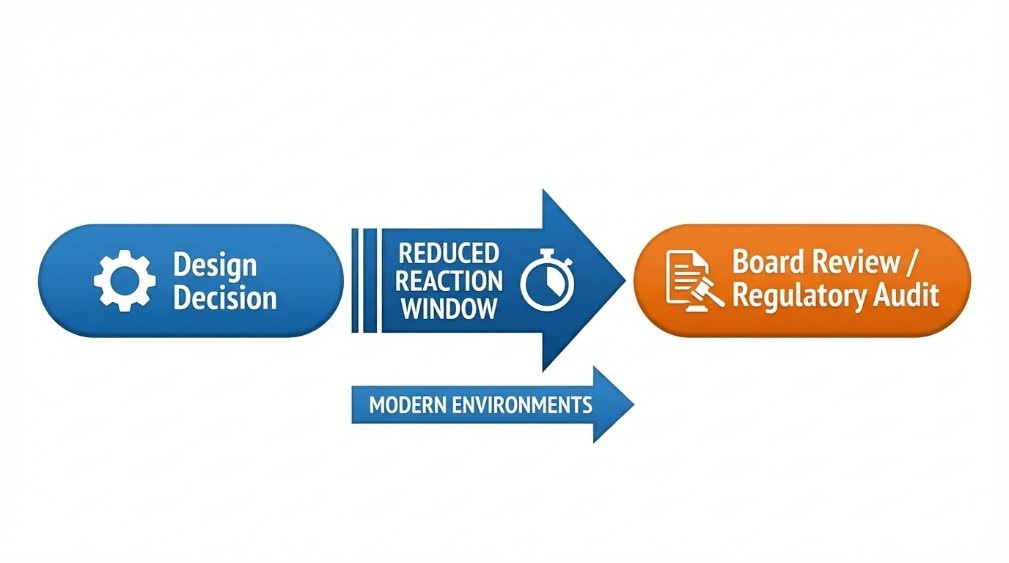

That buffer no longer exists. Cloud scale and automated pipelines compress the distance between design decisions and business exposure. What is incorrect propagates as quickly as what is correct. By the time disagreement is noticed, it has already influenced planning, funding, external commitments, or regulatory posture.

Speed magnifies consequences.

In regulated industries, regulators increasingly expect traceability and demonstrable lineage. In public companies, boards expect confidence in reported metrics. In highly competitive markets, strategic decisions are made on analytics that assume internal consistency.

When architecture is weak, the stack becomes fragile under stress.

This acceleration changes the nature of executive risk. The question is no longer whether the system works today, but whether it can explain itself tomorrow. When answers are required quickly, architecture is either present or it is not. There is no retroactive substitute.

Consider a common enterprise review where two leadership teams arrive with materially different numbers drawn from the same platform. The platform performed exactly as designed. The architecture did not. At that point, accountability does not remain distributed. It moves upward.
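A minimal sketch of how such a disagreement can arise, assuming a handful of invented order records and two hypothetical revenue definitions (`revenue_sales_view` and `revenue_finance_view`). None of these names or figures come from a real system. Both functions read the same data and both run correctly; they disagree only because nothing establishes which definition is authoritative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Order:
    order_id: int
    amount: float    # gross order value
    refunded: float  # amount later refunded
    status: str      # "booked" or "shipped"


# Illustrative data only; the figures are invented for this sketch.
ORDERS = [
    Order(1, 100.0, 0.0, "shipped"),
    Order(2, 250.0, 50.0, "shipped"),
    Order(3, 400.0, 0.0, "booked"),
]


def revenue_sales_view(orders):
    """Hypothetical sales definition: every order counts, at gross value."""
    return sum(o.amount for o in orders)


def revenue_finance_view(orders):
    """Hypothetical finance definition: shipped orders only, net of refunds."""
    return sum(o.amount - o.refunded for o in orders if o.status == "shipped")


sales_total = revenue_sales_view(ORDERS)
finance_total = revenue_finance_view(ORDERS)
print("sales view:", sales_total)
print("finance view:", finance_total)
```

The platform in this sketch has done nothing wrong. The gap between the two totals is a definitional gap, and no amount of pipeline tuning closes it.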

Understanding this shift requires examining the role of the architect more closely.

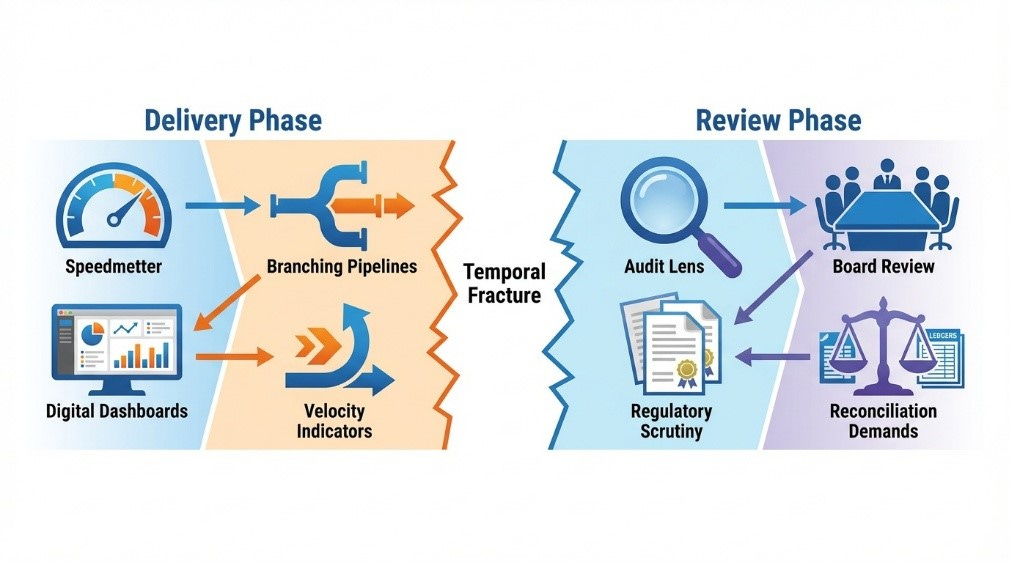

The Architect’s Role and the Temporal Fracture

Architecture is evaluated retrospectively.

It is built prospectively.

This temporal fracture is subtle but powerful. Architecture decisions are made during delivery, under schedule pressure, funding constraints, and competing priorities. They are judged later, when the system is examined under audit, board review, regulatory inquiry, or strategic reassessment. The individuals building the system rarely face scrutiny in the moment. The scrutiny arrives after the system has already shaped business decisions.

The absence of architecture is invisible during delivery.

It becomes visible during review.

During delivery, velocity dominates. Milestones are met. Pipelines execute. Dashboards render. The stack appears successful because it is functioning. There is no immediate signal that meaning is fragmented, ownership is unclear, or lineage is incomplete. In fact, the absence of architectural rigor may even appear efficient. Less friction. Fewer constraints. Faster output.

The problem emerges when explanation is required.

Architecture exists not merely to move data, but to define authority, preserve history, reconcile disagreement, and sustain meaning over time. When those elements are not deliberately designed, the stack still operates. What is missing is the institutional memory that proves how and why it operates.
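One way to picture that institutional memory is an append-only history of a business definition, in which each change records when it took effect and who owned it. The sketch below is illustrative only: the `HISTORY` entries, the owners, and the helper `definition_as_of` are assumptions made for this example, not part of any particular product or method.

```python
from datetime import date

# Append-only history of one definition. Entries are added, never edited.
# Each entry: (effective date, definition text, accountable owner).
# All values are invented for illustration.
HISTORY = [
    (date(2022, 1, 1), "active customer: purchased in the last 12 months", "Marketing"),
    (date(2024, 7, 1), "active customer: purchased in the last 6 months", "Sales Operations"),
]


def definition_as_of(history, when):
    """Return the entry in force on `when`, or None if none existed yet."""
    in_force = [entry for entry in history if entry[0] <= when]
    if not in_force:
        return None
    # The most recent effective date on or before `when` wins.
    return max(in_force, key=lambda entry: entry[0])


entry = definition_as_of(HISTORY, date(2023, 3, 15))
print(entry)
```

With a record like this, a question such as "what did this number mean when the report was issued?" can be answered from the architecture rather than from someone's recollection.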

How Executives Recognize Architectural Drift

Executives rarely see architecture directly. They see its effects. Recognizing architectural weakness requires observing patterns rather than artifacts.

Architectural drift is often present when:

Business definitions vary subtly across reports with no clear authority to reconcile them.

Transformation logic resides inside pipelines without documented ownership.

Platform selection discussions are framed as architectural decisions.

Architects defend tooling choices more strongly than business meaning.

Questions about lineage produce explanations of processing rather than explanations of intent.

Resistance to executive direction is rarely overt. It is often expressed as technical inevitability. “The platform requires this.” “The stack works this way.” “The tool enforces this structure.” When the physical stack is treated as destiny, architecture has quietly yielded authority to implementation.

Another signal is silence.

When architectural review forums focus on performance, scalability, or integration mechanics but do not address ownership of meaning, reconciliation boundaries, or definitional authority, architecture is being shaped by the stack rather than governing it.

When Architecture Is Defined by the Stack

Designing based on the selected stack is not inherently wrong. Every architecture must operate within technical constraints. The fracture occurs when the constraints define the structure rather than inform it.

When the stack dictates architecture:

Data models mirror physical storage patterns rather than business semantics.

Integration flows reflect tooling capability instead of enterprise coherence.

Governance becomes documentation layered on top of decisions already embedded in code.

Architectural standards follow platform features rather than executive intent.

At that point, architecture is reactive. It describes what has been built rather than directing what should be built.

True architecture begins with purpose. It defines what constitutes authoritative information, how it persists across change, and who is accountable when definitions evolve. The stack should implement those decisions, not determine them.

Controls Executives Can Put in Place

If architecture is judged retrospectively, executive control must be applied prospectively.

First, architectural authority must be clearly separated from platform selection authority. Tooling decisions must not substitute for definitional decisions. Executives should require explicit articulation of business meaning, ownership, reconciliation rules, and lineage expectations before stack-specific design begins.

Second, architectural reviews must examine intent, not only implementation. Reviews should ask:

Who owns this definition?

How is historical change preserved?

Where are reconciliation boundaries defined?

How is disagreement resolved?

What evidence exists that this meaning can be reproduced under examination?

These are architectural questions. They are not tooling questions.
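The review questions above can be sketched as a simple record that a governance gate might inspect. This is a hedged illustration, not a prescribed format: `DefinitionRecord`, its field names, and `review_gaps` are hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field


@dataclass
class DefinitionRecord:
    """Hypothetical record describing one business definition."""
    name: str
    owner: str = ""
    history_policy: str = ""
    reconciliation_boundary: str = ""
    dispute_authority: str = ""
    evidence: list = field(default_factory=list)


def review_gaps(record):
    """Return the review questions this record leaves unanswered."""
    checks = {
        "Who owns this definition?": record.owner,
        "How is historical change preserved?": record.history_policy,
        "Where are reconciliation boundaries defined?": record.reconciliation_boundary,
        "How is disagreement resolved?": record.dispute_authority,
        "What evidence exists that this meaning can be reproduced?": record.evidence,
    }
    # An empty string or empty list counts as "not answered".
    return [question for question, answer in checks.items() if not answer]


# Example values are invented for illustration.
record = DefinitionRecord(
    name="net revenue",
    owner="Finance",
    history_policy="append-only, effective-dated",
)
gaps = review_gaps(record)
for question in gaps:
    print("Unanswered:", question)
```

Nothing in the record refers to a platform feature, which is the point: the questions can be asked and answered before any stack-specific design begins.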

Third, architectural artifacts must exist independently of the stack. If the architecture cannot be described without referencing specific platform features, then the stack has already shaped the design. Architecture should survive tool replacement. If it cannot, it was never architecture.

Fourth, escalation pathways must be explicit. When definitional conflict arises, there must be a defined authority empowered to resolve it. Without that mechanism, architectural decisions default upward only after conflict becomes visible.

Finally, architectural compliance must be evaluated at governance gates, not after production release. Once pipelines are live and dashboards are adopted, reversal becomes politically and operationally expensive. Prospective enforcement is uncomfortable. Retrospective correction is destabilizing.

The architect’s role is therefore not merely technical. It is structural. Architects translate executive intent into durable information structures that persist beyond tooling cycles and delivery waves. When that translation is absent, the stack performs. When review arrives, explanation falters.

Even with controls in place, the ultimate burden of architectural weakness follows a predictable path.

Where Accountability Ultimately Lands

Architecture has always existed to answer questions that arise after decisions are made. It explains what data represents, how it was formed, and why it should be trusted over alternatives. When that explanatory chain is missing, accountability does not vanish. It relocates.

If ownership of meaning is not explicit, leadership inherits it. If reconciliation paths are undefined, governance absorbs the burden. If no one can demonstrate how results were produced, responsibility rests with those who approved the system that produced them.

This is not a failure of execution. It is the predictable outcome of assuming that modern platforms eliminate the need for architectural intent. The organization continues to operate. Reports continue to render. Decisions continue to be made. What changes is who must answer when those decisions are questioned.

The quiet cost of this assumption is not operational. It is institutional. Over time, trust in information erodes, not because individuals acted improperly, but because the system cannot prove itself under examination.

At that point, the absence of architecture is no longer theoretical. It becomes visible in escalations, audit inquiries, funding friction, and governance intervention. The stack remains. The illusion of control does not.

ABOUT THE AUTHORS

Cynthia (Cindi) Meyersohn is Chief Operating Officer of DataVaultAlliance Holdings and Founder of DataRebels LLC, where she leads strategy, operations, and global enablement for the Data Vault 2.1 ecosystem. With more than four decades in information technology spanning commercial and federal sectors, she is a Certified Data Vault 2.1 Instructor and enterprise architect who has designed and implemented large-scale, auditable data warehouse solutions in highly secure environments. She holds a Master of Science in Systems Engineering from The George Washington University and a Bachelor of Science in Information Systems from Strayer University. She currently resides in Richmond, VA with her family, including seven grandchildren.

Dan Linstedt is the inventor of Data Vault and a recognized authority in scalable data architecture and enterprise data integration. He has advised global organizations on building resilient, audit-ready data ecosystems that support analytics, compliance, and AI at scale. As an AI strategist and industry educator, Dan focuses on aligning modern data engineering practices with emerging intelligent systems. His work bridges disciplined data architecture with practical innovation in advanced analytics and automation.
